Jun 26 2018

Image credit: QUT

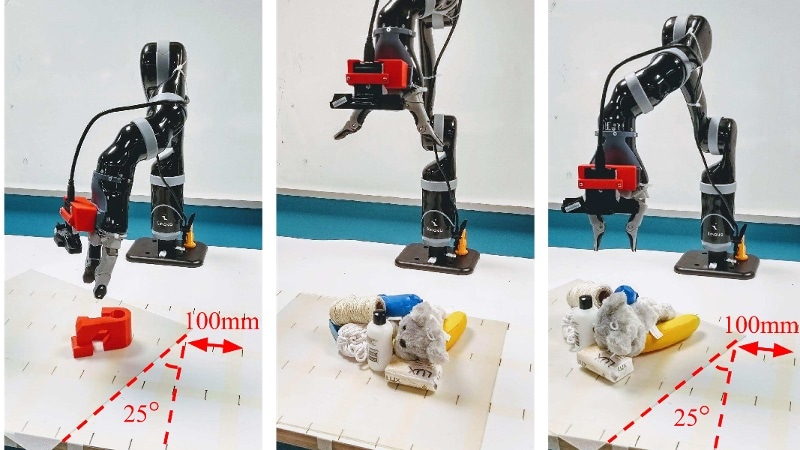

Roboticists from QUT have developed a faster and more accurate technique that lets robots grasp objects in changing and cluttered environments, opening the door to making robots more useful in both domestic and industrial settings.

- The new technique lets a robot rapidly scan its surroundings and, using a depth image, map every pixel it captures to a predicted grasp quality

- Real-world tests achieved accuracy rates of nearly 88% for dynamic grasping and nearly 92% in static experiments

- The technique is based on a Generative Grasping Convolutional Neural Network

Dr Jürgen Leitner from QUT said that although grasping and picking up an object is a basic task for humans, it has proved remarkably difficult for machines.

We have been able to program robots, in very controlled environments, to pick up very specific items. However, one of the key shortcomings of current robotic grasping systems is the inability to quickly adapt to change, such as when an object gets moved. The world is not predictable—things change and move and get mixed up and, often, that happens without warning—so robots need to be able to adapt and work in very unstructured environments if we want them to be effective.

Dr Jürgen Leitner, Science and Engineering Faculty, QUT

The new method, developed by PhD researcher Douglas Morrison, Dr Leitner, and Distinguished Professor Peter Corke from QUT’s Science and Engineering Faculty, is a real-time, object-independent grasp synthesis technique for closed-loop grasping.

The Generative Grasping Convolutional Neural Network approach works by predicting the quality and pose of a two-fingered grasp at every pixel. By mapping what is in front of it using a depth image in a single pass, the robot doesn’t need to sample many different possible grasps before making a decision, avoiding long computing times. In our real-world tests, we achieved an 83% grasp success rate on a set of previously unseen objects with adversarial geometry and 88% on a set of household objects that were moved during the grasp attempt. We also achieve 81% accuracy when grasping in dynamic clutter.

Douglas Morrison, PhD Researcher, Science and Engineering Faculty, QUT
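Morrison's point about avoiding sampling can be illustrated with a short sketch. It assumes, hypothetically, that the network has already produced three per-pixel maps of the same size (grasp quality, gripper angle, and gripper width); here they are small hand-written grids standing in for real network output, and the names `Grasp` and `best_grasp` are illustrative, not QUT's code. Because a prediction already exists for every pixel, choosing a grasp is a single pass for the highest-quality pixel rather than generating and scoring many candidate grasps.

```python
from dataclasses import dataclass
from typing import List

Grid = List[List[float]]


@dataclass
class Grasp:
    row: int        # pixel row in the depth image
    col: int        # pixel column in the depth image
    quality: float  # predicted grasp quality at this pixel (assumed 0..1)
    angle: float    # gripper rotation in radians (hypothetical representation)
    width: float    # gripper opening (hypothetical units, e.g. pixels)


def best_grasp(quality: Grid, angle: Grid, width: Grid) -> Grasp:
    """Return the grasp at the pixel with the highest predicted quality.

    The maps are assumed to be same-sized grids produced in one forward
    pass, so selection is a single argmax with no candidate sampling.
    """
    best = None  # (quality, row, col) of the best pixel seen so far
    for r, row in enumerate(quality):
        for c, q in enumerate(row):
            if best is None or q > best[0]:
                best = (q, r, c)
    if best is None:
        raise ValueError("quality map is empty")
    q, r, c = best
    # The pose for that pixel is read straight from the other maps.
    return Grasp(r, c, q, angle[r][c], width[r][c])


# Toy 3x3 maps standing in for network output.
quality_map = [[0.1, 0.2, 0.1], [0.3, 0.9, 0.4], [0.0, 0.2, 0.1]]
angle_map = [[0.0, 0.0, 0.0], [0.0, 0.5, 0.0], [0.0, 0.0, 0.0]]
width_map = [[10.0] * 3 for _ in range(3)]

print(best_grasp(quality_map, angle_map, width_map))
```

In a real system the chosen pixel would still have to be converted into a robot pose using the depth value and camera calibration; that step is omitted here.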

Dr Leitner said the technique overcomes several limitations of existing deep-learning grasping methods.

For example, in the Amazon Picking Challenge, which our team won in 2017, our robot CartMan would look into a bin of objects, make a decision on where the best place was to grasp an object and then blindly go in to try to pick it up. Using this new method, we can process images of the objects that a robot views within about 20 milliseconds, which allows the robot to update its decision on where to grasp an object and then do so with much greater purpose. This is particularly important in cluttered spaces.

Dr Jürgen Leitner, Science and Engineering Faculty, QUT
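The difference between "blindly" going in and updating the decision on the way can be sketched as a simple control loop. This is an illustration under stated assumptions, not the QUT controller: the simulated target, the proportional step toward it, and the `gain` value are all hypothetical. The only figure taken from the article is the roughly 20 ms processing time, which allows about 1 / 0.020 s = 50 grasp re-estimates per second.

```python
from typing import Callable, Tuple

Point = Tuple[float, float]

PROCESSING_TIME_S = 0.020                    # ~20 ms per image (from the article)
MAX_UPDATES_PER_S = 1.0 / PROCESSING_TIME_S  # about 50 re-plans per second


def closed_loop_approach(
    observe_target: Callable[[int], Point],
    start: Point,
    steps: int,
    gain: float = 0.5,  # hypothetical fraction of the remaining distance per step
) -> Point:
    """Move a simulated gripper toward a grasp target that is re-estimated
    from a fresh observation at every control step."""
    x, y = start
    for t in range(steps):
        tx, ty = observe_target(t)  # stand-in for re-running grasp prediction
        # Step toward the latest target, not the one chosen at the start.
        x += gain * (tx - x)
        y += gain * (ty - y)
    return x, y


def moving_object(t: int) -> Point:
    """Hypothetical object that is moved from (1, 1) to (3, 1) at step 5."""
    return (1.0, 1.0) if t < 5 else (3.0, 1.0)


final = closed_loop_approach(moving_object, start=(0.0, 0.0), steps=20)
print(MAX_UPDATES_PER_S, final)
```

An open-loop controller that committed to the first observation would head for (1, 1) and miss the moved object; the closed-loop version ends close to (3, 1) because it keeps re-reading the target.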

Dr Leitner said the advances would benefit both industrial automation and domestic settings.

This line of research enables us to use robotic systems not just in structured settings where the whole factory is built based on robotic capabilities. It also allows us to grasp objects in unstructured environments, where things are not perfectly planned and ordered, and robots are required to adapt to change. This has benefits for industry—from warehouses for online shopping and sorting, through to fruit picking. It could also be applied in the home, as more intelligent robots are developed to not just vacuum or mop a floor, but also to pick items up and put them away.

Dr Jürgen Leitner, Science and Engineering Faculty, QUT

The team’s paper, titled “Closing the Loop for Robotic Grasping: A Real-time, Generative Grasp Synthesis Approach”, will be presented this week at Robotics: Science and Systems, the most selective international robotics conference, held at Carnegie Mellon University in Pittsburgh, USA.

The Australian Centre for Robotic Vision supported the study.