May 30 2019

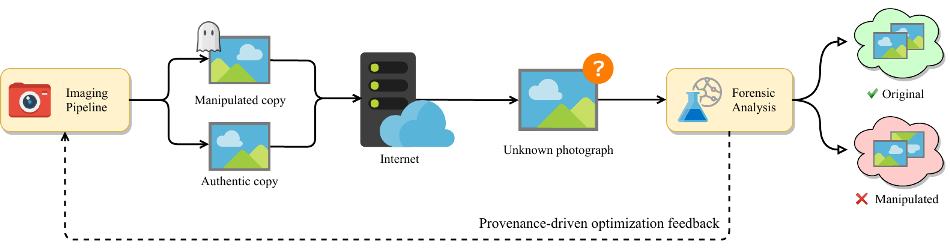

Scientists at the NYU Tandon School of Engineering have developed an experimental method that uses artificial intelligence (AI) to authenticate images throughout the whole pipeline, from acquisition to delivery, in order to counter advanced techniques for manipulating photos and video.

(Image credit: NYU Tandon School of Engineering)

In experiments, this prototype imaging pipeline increased the odds of spotting manipulation from approximately 45% to more than 90% without compromising image quality.

Establishing whether a photo or video is genuine is becoming increasingly difficult. Sophisticated methods for modifying photos and videos have become so easily accessible that so-called “deep fakes” (manipulated photos or videos that are highly convincing and often feature political figures or celebrities) have become routine.

Paweł Korus, a research assistant professor in the Department of Computer Science and Engineering at NYU Tandon, pioneered this method. It replaces the typical photo development pipeline with a neural network, one form of AI, that introduces carefully crafted artifacts directly into the image at the moment of image acquisition. These artifacts, akin to "digital watermarks," are extremely sensitive to manipulation.

Unlike previously used watermarking techniques, these AI-learned artifacts can reveal not only the existence of photo manipulations, but also their character.

Paweł Korus, Research Assistant Professor, Department of Computer Science and Engineering, NYU Tandon

The process is optimized for in-camera embedding and can survive the image distortion applied by online photo sharing services.

The benefits of incorporating such systems into cameras are obvious.

“If the camera itself produces an image that is more sensitive to tampering, any adjustments will be detected with high probability,” said Nasir Memon, a professor of computer science and engineering at NYU Tandon and co-author, with Korus, of a paper describing the method. “These watermarks can survive post-processing; however, they’re quite fragile when it comes to modification: If you alter the image, the watermark breaks,” Memon said.

Most other efforts to prove image authenticity inspect only the end product — an especially difficult task.

Korus and Memon, by contrast, reasoned that contemporary digital imaging already depends on machine learning. Every photo captured on a smartphone goes through near-instantaneous processing to adjust for low light and to stabilize images, both of which rely on onboard AI. In the years ahead, AI-driven processes are expected to completely replace the traditional digital imaging pipelines. As this evolution takes place, Memon said that “we have the opportunity to dramatically change the capabilities of next-generation devices when it comes to image integrity and authentication. Imaging pipelines that are optimized for forensics could help restore an element of trust in areas where the line between real and fake can be difficult to draw with confidence.”

Korus and Memon note that while their method shows promise in testing, more work is required to refine the system. The researchers will present their paper, titled “Content Authentication for Neural Imaging Pipelines: End-to-end Optimization of Photo Provenance in Complex Distribution Channels,” at the Conference on Computer Vision and Pattern Recognition in Long Beach, California, this June.